How to deploy Apache HBase?

Author: Rodrigo Agundez. Category: Java & Web

HBase is an open source, non-relational, distributed, column-oriented database built on top of HDFS. It is designed for real-time random read and write access to very large datasets.

Chad Walters and Jim Kellerman at Powerset started the project in 2006, modeling the database after Google’s Bigtable. From the beginning, HBase was developed to scale linearly by just adding nodes to the cluster. In 2010 it became an Apache top-level project.

Requirements to deploy HBase:

- Java

Like Hadoop, HBase is written in Java, so an appropriate Java version should be installed.

- Hadoop HDFS

As mentioned, HBase is built on top of HDFS. To take full advantage of HBase, we need to point it at the HDFS cluster it should use, which requires a Hadoop HDFS installation. For testing and experimenting, HBase can write to the local filesystem, which is the default configuration; in that case no HDFS installation is required.

- ZooKeeper

Coordination between the nodes is handled by ZooKeeper. HBase ships with its own ZooKeeper installation, but it can also use an existing ensemble.

Data model

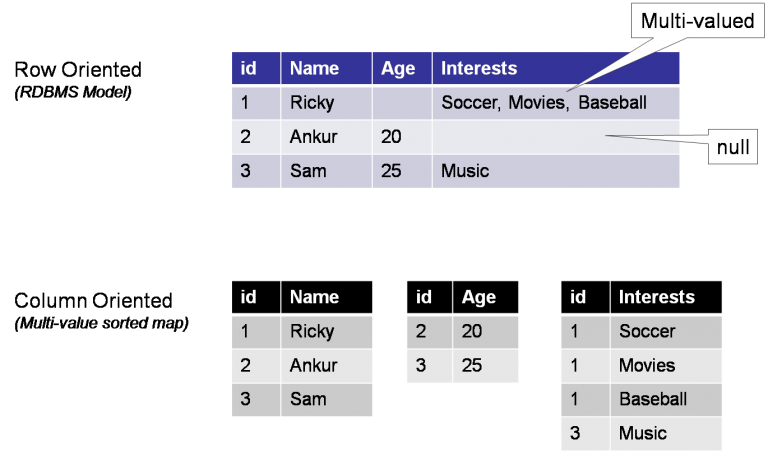

Column-oriented database

This is just an example of how to view a column-oriented database; it does not describe precisely how HBase stores its data.

Concepts

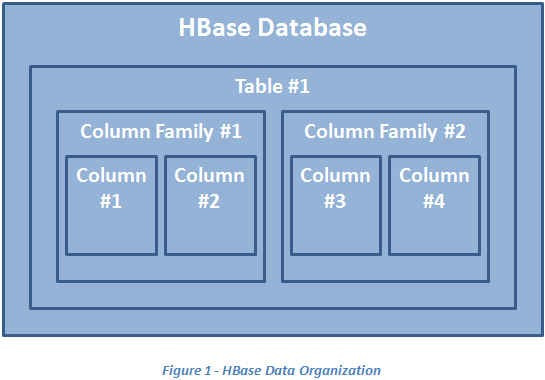

Table: outermost data container

A table in HBase is the outermost data container. Unlike a relational database table, its inner structure is not rigid. If a comparison had to be made, an HBase table is closer to a database in relational database terms.

Column family members stored together

A column family can be viewed as a table in a relational database, with the difference that different rows can have a different number of columns. This implies that NULL entries do not exist in HBase.

Column families must be specified in advance as part of the schema definition, but new column family members (columns) can be added on demand.

All members of a column family are stored together on the filesystem.

Columns

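The columns of a row are grouped into column families, and all members of a column family share the same prefix. For example, columns named info:name and info:email would both belong to a (hypothetical) column family called info.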

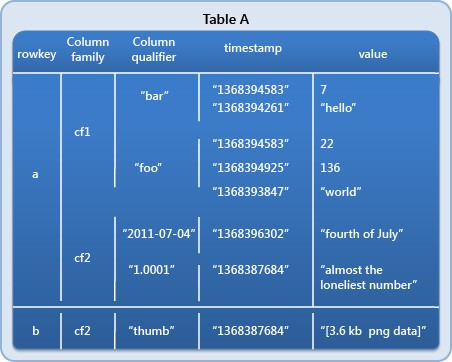
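As a rough mental model only (it is not how HBase lays out data on disk), a table can be pictured as nested maps: row key, then column family, then column qualifier. The sketch below uses plain Python dictionaries; the family names info and stats, the helper names and the sample rows are all made up for illustration.

```python
# Toy model of a table: row key -> column family -> qualifier -> value.
# Families are fixed up front (the schema); qualifiers are free-form.
COLUMN_FAMILIES = {"info", "stats"}  # hypothetical schema

table = {}


def put(row_key, column, value):
    """Store a value under a 'family:qualifier' column for the given row."""
    family, qualifier = column.split(":", 1)
    if family not in COLUMN_FAMILIES:
        raise KeyError(f"unknown column family: {family}")
    table.setdefault(row_key, {}).setdefault(family, {})[qualifier] = value


def columns(row_key):
    """Return the sorted 'family:qualifier' names that exist for a row."""
    row = table.get(row_key, {})
    return sorted(f"{fam}:{qual}" for fam, quals in row.items() for qual in quals)


put("user1", "info:name", "Ada")
put("user1", "info:email", "ada@example.com")
put("user2", "info:name", "Bob")
put("user2", "stats:logins", 3)  # a new column, added on demand
```

Note that user2 simply has no info:email entry: nothing is stored for a missing column, which is why there are no NULL values to speak of.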

Row Key aka Primary Key

Each table must have an element defined as a Primary Key. All attempts to access data inside HBase tables must use this Primary Key.

Sorted table rows

Table rows are sorted automatically by row key, and the data is partitioned into regions according to the row keys.
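Here is a small Python sketch of what sorting by row key means in practice, assuming keys are compared byte by byte (lexicographically), as HBase does. The keys and the scan helper are made up for illustration:

```python
from bisect import bisect_left

# Row keys are compared as raw bytes, so the order is lexicographic,
# not numeric: b"row10" sorts before b"row2".
row_keys = sorted([b"row1", b"row2", b"row10", b"row20", b"row3"])


def scan(start, stop):
    """Return the row keys in [start, stop), like a range scan over sorted rows."""
    lo = bisect_left(row_keys, start)
    hi = bisect_left(row_keys, stop)
    return row_keys[lo:hi]


for key in scan(b"row1", b"row2"):
    print(key)
```

Because the order is lexicographic, numeric parts of a key are often zero-padded (row01, row02, row10) when a natural numeric order is wanted.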

Versioned cells

Table cells are versioned. By default the version is a timestamp auto-assigned by HBase. Hence every single data entry becomes a (key, value) pair in which the key includes the version.
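To make the idea concrete, here is a minimal Python sketch of versioned cells. It is only an illustration under simple assumptions: versions are millisecond timestamps, and the function names put_version and get_versions are hypothetical, not part of any HBase API.

```python
import time

# Toy versioned cell store: (row, column) -> {timestamp: value}.
cells = {}


def put_version(row, column, value, timestamp=None):
    """Write a new version; default the version to the current time in ms."""
    if timestamp is None:
        timestamp = int(time.time() * 1000)
    cells.setdefault((row, column), {})[timestamp] = value


def get_versions(row, column, max_versions=1):
    """Return up to max_versions (timestamp, value) pairs, newest first."""
    versions = cells.get((row, column), {})
    return sorted(versions.items(), reverse=True)[:max_versions]


put_version("user1", "info:email", "old@example.com", timestamp=100)
put_version("user1", "info:email", "new@example.com", timestamp=200)
```

A plain read returns only the newest version, while older versions remain available when explicitly requested.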

Regions

Tables are split into regions, and the regions are distributed across the HBase cluster. As a table grows, so does its number of regions.
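Since rows are sorted, each region can be described by the key range it covers, from its start key (inclusive) up to the next region's start key (exclusive). The Python sketch below shows how a row key could be mapped to its region with a binary search. The boundaries, server names and the locate_region helper are invented for the example.

```python
from bisect import bisect_right

# Hypothetical region boundaries: each region starts at its start key and
# runs up to (but not including) the next region's start key.
region_start_keys = [b"", b"g", b"n", b"t"]
region_servers = ["server-a", "server-b", "server-c", "server-a"]


def locate_region(row_key):
    """Return the index of the region whose key range contains row_key."""
    return bisect_right(region_start_keys, row_key) - 1


idx = locate_region(b"oscar")
print(idx, region_servers[idx])
```

When a region grows too large it is split in two, which in this model amounts to inserting a new start key into the sorted list.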

Data insertion

Updates are atomic at the row level, no matter how many columns the row-level transaction involves.

HBase: built on JRuby shell

HBase does not provide an SQL-like query language. The HBase shell is built on the JRuby shell, so you can write scripts that use everything the JRuby shell offers. A Java API is also available to manage HBase.

Performance versus Cassandra

In another report, on Cassandra, I compared the two systems, and Cassandra appears to be the clear winner. You can read that report in my second blog post.

A superior system?

A small report by DataStax, a leader in database management, mentions Cassandra as a superior system, and even goes as far as to state that HBase is used just because a Hadoop system that implements it is already in place:

“HBase is sometimes used for an online application because an existing Hadoop implementation exists at a site and not because it is the right fit for the application. HBase is typically not a good choice for developing always-on online applications and is nearly 2-3 years behind Cassandra in many technical respects.”
